This post summarizes what I learned from the free online book Neural Networks and Deep Learning: building a neural network in Python that recognizes handwritten digits.

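The code (written for Python 2) consists of three parts:

1) Data loading and preprocessing

2) The neural network class and its methods

3) A test script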

1) Loading the data:

```python
#!/usr/bin/env python
# -*- coding: utf-8 -*-
# @Time    : 2017-03-12 15:11
# @Author  : CC
# @File    : net_load_data.py
# @Software: PyCharm Community Edition

import cPickle

import numpy as np


def load_data():
    """Open the unpacked MNIST pickle and return its three data sets."""
    with open('data/mnist_pkl/mnist.pkl', 'rb') as f:
        try:
            train_data, validation_data, test_data = cPickle.load(f)
            print "the file opened successfully"
            # train_data[0].shape == (50000, 784)
            # train_data[1].shape == (50000,)
            return (train_data, validation_data, test_data)
        except EOFError:
            print 'the file open error'
            return None


def data_transform():
    """Convert the raw arrays into the format the network computes with."""
    t_d, va_d, te_d = load_data()
    # t_d[0].shape == (50000, 784)
    # va_d[0].shape == te_d[0].shape == (10000, 784)

    # Reshape each of the 50,000 training images into a (784, 1) column vector.
    n = [np.reshape(x, (784, 1)) for x in t_d[0]]
    # Turn each of the 50,000 training labels into a (10, 1) one-hot vector.
    m = [vectors(y) for y in t_d[1]]
    train_data = zip(n, m)  # list of (image, label) tuples

    # The validation and test sets hold 10,000 images each.
    # Their labels are left as plain digits, not vectorized.
    n = [np.reshape(x, (784, 1)) for x in va_d[0]]
    validation_data = zip(n, va_d[1])
    n = [np.reshape(x, (784, 1)) for x in te_d[0]]
    test_data = zip(n, te_d[1])

    # Resulting layout:
    #   len(train_data) == 50000, len(train_data[0]) == 2
    #   train_data[0][0].shape == (784, 1)
    #   train_data[0][1].shape == (10, 1)
    #   test_data[0][1] == 7
    return (train_data, validation_data, test_data)


def vectors(y):
    """Return a (10, 1) one-hot vector for the digit label y."""
    label = np.zeros((10, 1))
    label[y] = 1.0  # float, for floating-point arithmetic
    return label
```
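To see what `data_transform` produces without downloading MNIST, here is a minimal sketch on synthetic data. It is written for Python 3 (unlike the listing above), and `one_hot`, `images`, `labels` and `train_pairs` are names made up for this illustration; the random array merely stands in for real images.

```python
import numpy as np


def one_hot(label, num_classes=10):
    """Return a (num_classes, 1) column vector with 1.0 at index `label`."""
    vec = np.zeros((num_classes, 1))
    vec[label] = 1.0
    return vec


# Synthetic stand-in for MNIST: 3 flattened "images" of 784 pixels each.
images = np.random.rand(3, 784)
labels = np.array([5, 0, 9])

# Same two steps as data_transform: column vectors plus one-hot labels.
inputs = [np.reshape(x, (784, 1)) for x in images]
targets = [one_hot(y) for y in labels]
train_pairs = list(zip(inputs, targets))  # zip is lazy in Python 3

print(train_pairs[0][0].shape)  # shape of the first image
print(train_pairs[0][1].ravel())  # one-hot vector of the first label
```

In Python 3 the `list(...)` around `zip` matters, because the training loop later calls `len` and `random.shuffle` on the data.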

2) Building the network
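For reference, these are the equations that `backprop` and `updata_parameter` implement, in the book's notation. I am assuming the quadratic cost $C_x = \tfrac{1}{2}\lVert a^{L} - y \rVert^{2}$ here, which is what `top_subtract` returning `x - y` corresponds to. $L$ is the output layer, $\odot$ the element-wise product, $\eta$ the learning rate `eta`, and $m$ the mini-batch size.

$$
\begin{aligned}
\delta^{L} &= (a^{L} - y) \odot \sigma'(z^{L}) \\
\delta^{l} &= \big((w^{l+1})^{T}\,\delta^{l+1}\big) \odot \sigma'(z^{l}) \\
\frac{\partial C_x}{\partial b^{l}} &= \delta^{l}, \qquad \frac{\partial C_x}{\partial w^{l}} = \delta^{l}\,(a^{l-1})^{T} \\
w^{l} &\leftarrow w^{l} - \frac{\eta}{m}\sum_{x}\frac{\partial C_{x}}{\partial w^{l}}, \qquad b^{l} \leftarrow b^{l} - \frac{\eta}{m}\sum_{x}\frac{\partial C_{x}}{\partial b^{l}}
\end{aligned}
$$

The first two lines are the `delta` computations in `backprop`, the third line fills `deltab` and `deltaw`, and the last line is the averaged update in `updata_parameter`.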

```python
#!/usr/bin/env python
# -*- coding: utf-8 -*-
# @Time    : 2017-03-12 16:07
# @Author  : CC
# @File    : net_network.py

import random

import numpy as np


class Network(object):  # new-style class (explicit `object` base in Python 2)

    def __init__(self, sizes):
        self.num_layers = len(sizes)
        self.sizes = sizes
        # One weight matrix per pair of adjacent layers, shape (next, previous).
        self.weight = [np.random.randn(a1, a2)
                       for (a1, a2) in zip(sizes[1:], sizes[:-1])]
        # One bias column vector per non-input layer.
        self.bias = [np.random.randn(a3, 1) for a3 in sizes[1:]]

    def SGD(self, train_data, min_batch_size, epoches, eta, test_data=None):
        """Mini-batch stochastic gradient descent.

        1) Shuffle the samples and split them into mini-batches.
        2) Compute the gradients by backpropagation.
        3) Update the weights and biases.
        """
        n = len(train_data)  # 50000
        for k in xrange(0, epoches):
            # Reshuffle, then rebuild the batches, so that the shuffle
            # actually changes what each epoch trains on.
            random.shuffle(train_data)
            min_batches = [train_data[j:j + min_batch_size]
                           for j in xrange(0, n, min_batch_size)]
            for min_batch in min_batches:  # one batch at a time, which is slow
                self.updata_parameter(min_batch, eta)
            if test_data:
                num = self.evaluate(test_data)
                print "the {0}th epoches: {1}/{2}".format(k, num, len(test_data))
            else:
                print 'epoches {0} completed'.format(k)

    def forward(self, x):
        """Propagate x through every layer and return the output activation."""
        for w, b in zip(self.weight, self.bias):
            x = sigmoid(np.dot(w, x) + b)
        return x

    def updata_parameter(self, min_batch, eta):
        """Update the parameters from one mini-batch.

        1) Backpropagate to get the gradient of each sample.
        2) Sum the gradients over the batch.
        3) Update the weights and biases with the averaged gradient.
        """
        ndeltab = [np.zeros(b.shape) for b in self.bias]
        ndeltaw = [np.zeros(w.shape) for w in self.weight]
        for x, y in min_batch:
            deltab, deltaw = self.backprop(x, y)
            ndeltab = [nb + db for nb, db in zip(ndeltab, deltab)]
            ndeltaw = [nw + dw for nw, dw in zip(ndeltaw, deltaw)]
        self.bias = [b - eta * ndb / len(min_batch)
                     for ndb, b in zip(ndeltab, self.bias)]
        self.weight = [w - eta * ndw / len(min_batch)
                       for ndw, w in zip(ndeltaw, self.weight)]

    def backprop(self, x, y):
        """Run a forward pass, then backpropagate; return (deltab, deltaw)."""
        activation = x
        activations = [x]  # output of every layer
        zs = []            # weighted inputs, needed for the sigmoid derivative
        # feedforward
        for w, b in zip(self.weight, self.bias):
            z = np.dot(w, activation) + b
            zs.append(z)
            activation = sigmoid(z)
            activations.append(activation)
        # delta of the last layer (element-wise product of equal shapes)
        delta = self.top_subtract(activations[-1], y) * dsigmoid(zs[-1])
        deltaw = [np.zeros(w1.shape) for w1 in self.weight]
        deltab = [np.zeros(b1.shape) for b1 in self.bias]
        deltab[-1] = delta
        deltaw[-1] = np.dot(delta, activations[-2].transpose())
        # walk backwards through the remaining layers
        for k in xrange(2, self.num_layers):
            delta = np.dot(self.weight[-k + 1].transpose(), delta) * dsigmoid(zs[-k])
            deltab[-k] = delta
            deltaw[-k] = np.dot(delta, activations[-k - 1].transpose())
        return (deltab, deltaw)

    def evaluate(self, test_data):
        """Count correct predictions on validation or test data.

        The labels are plain digits and are compared directly.
        """
        z = [(np.argmax(self.forward(x)), y) for x, y in test_data]
        return sum(int(a == b) for a, b in z)

    def top_subtract(self, x, y):
        return (x - y)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def dsigmoid(x):
    z = sigmoid(x)
    return z * (1 - z)
```
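A common way to convince yourself that `backprop` is right is to compare its gradients with finite differences. The following is a sketch under my own assumptions, not part of the original scripts: it is Python 3, it re-implements the forward and backward pass as plain functions on a tiny `[4, 3, 2]` network, it assumes the quadratic cost `0.5 * ||a - y||^2`, and `cost` and `numeric_weight_grads` are helper names invented for the check.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(weights, biases, x):
    for w, b in zip(weights, biases):
        x = sigmoid(np.dot(w, x) + b)
    return x


def cost(weights, biases, x, y):
    """Quadratic cost 0.5 * ||a - y||^2 for a single sample (assumed)."""
    a = forward(weights, biases, x)
    return 0.5 * np.sum((a - y) ** 2)


def backprop(weights, biases, x, y):
    """Same steps as Network.backprop, written as a plain function."""
    activation, activations, zs = x, [x], []
    for w, b in zip(weights, biases):
        z = np.dot(w, activation) + b
        zs.append(z)
        activation = sigmoid(z)
        activations.append(activation)
    grad_b = [np.zeros(b.shape) for b in biases]
    grad_w = [np.zeros(w.shape) for w in weights]
    s = sigmoid(zs[-1])
    delta = (activations[-1] - y) * s * (1 - s)
    grad_b[-1] = delta
    grad_w[-1] = np.dot(delta, activations[-2].T)
    for k in range(2, len(weights) + 1):
        s = sigmoid(zs[-k])
        delta = np.dot(weights[-k + 1].T, delta) * s * (1 - s)
        grad_b[-k] = delta
        grad_w[-k] = np.dot(delta, activations[-k - 1].T)
    return grad_b, grad_w


def numeric_weight_grads(weights, biases, x, y, eps=1e-6):
    """Central-difference estimate of dC/dw for every weight entry."""
    grads = [np.zeros(w.shape) for w in weights]
    for layer, w in enumerate(weights):
        for idx in np.ndindex(*w.shape):
            original = w[idx]
            w[idx] = original + eps
            c_plus = cost(weights, biases, x, y)
            w[idx] = original - eps
            c_minus = cost(weights, biases, x, y)
            w[idx] = original  # restore the weight
            grads[layer][idx] = (c_plus - c_minus) / (2 * eps)
    return grads


rng = np.random.RandomState(0)
sizes = [4, 3, 2]
weights = [rng.randn(m, n) for m, n in zip(sizes[1:], sizes[:-1])]
biases = [rng.randn(m, 1) for m in sizes[1:]]
x = rng.rand(4, 1)
y = np.array([[1.0], [0.0]])

grad_b, grad_w = backprop(weights, biases, x, y)
numeric_w = numeric_weight_grads(weights, biases, x, y)
max_err = max(np.max(np.abs(a - n)) for a, n in zip(grad_w, numeric_w))
print("largest difference:", max_err)
```

If the two gradients disagree by more than rounding noise, the usual suspects are an off-by-one in the negative layer indices or a missing transpose.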

3) Testing the network

```python
#!/usr/bin/env python
# -*- coding: utf-8 -*-
# @Time    : 2017-03-12 15:24
# @Author  : CC
# @File    : net_test.py

import net_load_data
import net_network as net

train_data, validation_data, test_data = net_load_data.data_transform()

net1 = net.Network([784, 30, 10])
min_batch_size = 10
eta = 3.0
epoches = 30
net1.SGD(train_data, min_batch_size, epoches, eta, test_data)
print "complete"
```

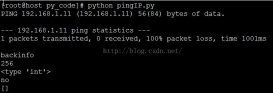
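With `min_batch_size = 10` and 50,000 training samples, each epoch performs 5,000 parameter updates, so 30 epochs come to 150,000 updates. The sketch below shows the split in Python 3; `make_mini_batches` is a hypothetical helper written for this illustration, and plain integers stand in for the (image, label) pairs.

```python
import random


def make_mini_batches(data, batch_size, rng):
    """Shuffle a copy of `data` and cut it into consecutive mini-batches."""
    shuffled = list(data)
    rng.shuffle(shuffled)
    return [shuffled[k:k + batch_size]
            for k in range(0, len(shuffled), batch_size)]


rng = random.Random(0)      # seeded so the run is repeatable
data = list(range(50000))   # stand-in for the 50,000 training pairs
batches = make_mini_batches(data, 10, rng)

updates_per_epoch = len(batches)
total_updates = updates_per_epoch * 30  # epoches = 30 in the test script
print(updates_per_epoch, total_updates)
```

Calling the helper once per epoch gives every epoch a different split, which is the point of shuffling in the first place.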

4) Results

```
the 9th epoches: 9405/10000
the 10th epoches: 9420/10000
the 11th epoches: 9385/10000
the 12th epoches: 9404/10000
the 13th epoches: 9398/10000
the 14th epoches: 9406/10000
the 15th epoches: 9396/10000
the 16th epoches: 9413/10000
the 17th epoches: 9405/10000
the 18th epoches: 9425/10000
the 19th epoches: 9420/10000
```
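The log is easier to read as accuracies. Here is a small Python 3 sketch that parses the lines shown above; the regular expression and the variable names are my own and not part of the original scripts.

```python
import re

log = """\
the 9th epoches: 9405/10000
the 10th epoches: 9420/10000
the 11th epoches: 9385/10000
the 12th epoches: 9404/10000
the 13th epoches: 9398/10000
the 14th epoches: 9406/10000
the 15th epoches: 9396/10000
the 16th epoches: 9413/10000
the 17th epoches: 9405/10000
the 18th epoches: 9425/10000
the 19th epoches: 9420/10000
"""

# Each match yields (epoch, correct, total) as strings.
pattern = re.compile(r"the (\d+)th epoches: (\d+)/(\d+)")
results = [(int(e), int(c) / int(t)) for e, c, t in pattern.findall(log)]

best_epoch, best_acc = max(results, key=lambda r: r[1])
mean_acc = sum(acc for _, acc in results) / len(results)

print("best: epoch {0} with {1:.2%}".format(best_epoch, best_acc))
print("mean over {0} epochs: {1:.2%}".format(len(results), mean_acc))
```

For these eleven epochs the accuracy on the 10,000 test images stays between 93.85% and 94.25%.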

Overall, the examples in this book are an excellent way to get familiar with both Python and neural networks.


Original post: http://blog.csdn.net/Ychan_cc/article/details/61922132