    import json
    import os
    import re

    import requests

    # One shared session so cookies and connections are reused across requests.
    session = requests.session()


    def fetch_url(url):
        """Download a URL and decode the body as GBK text."""
        return session.get(url).content.decode('gbk')


    def get_doc_id(url):
        """Extract the document ID from a .../view/<doc_id>.html URL."""
        return re.findall(r'view/(.*?)\.html', url)[0]


    def parse_type(content):
        """Read the docType field embedded in the page source."""
        return re.findall(r"docType.*?\:.*?\'(.*?)\'\,", content)[0]


    def parse_title(content):
        """Read the title field embedded in the page source."""
        return re.findall(r"title.*?\:.*?\'(.*?)\'\,", content)[0]


    def parse_doc(content):
        """Collect the text of a 'doc' document from its per-page JSON files."""
        result = ''
        url_list = re.findall('(https.*?0.json.*?)\\\\x22}', content)
        url_list = [addr.replace("\\\\\\/", "/") for addr in url_list]
        # The last five matches are skipped, as in the original code.
        for url in url_list[:-5]:
            content = fetch_url(url)
            y = 0
            txtlists = re.findall('"c":"(.*?)".*?"y":(.*?),', content)
            for item in txtlists:
                # A change in the y coordinate marks the start of a new line.
                if not y == item[1]:
                    y = item[1]
                    n = '\n'
                else:
                    n = ''
                result += n
                result += item[0].encode('utf-8').decode('unicode_escape', 'ignore')
        return result


    def parse_txt(doc_id):
        """Collect the text of a 'txt' document via the getdocinfo API."""
        content_url = 'https://wenku.baidu.com/api/doc/getdocinfo?callback=cb&doc_id=' + doc_id
        content = fetch_url(content_url)
        md5 = re.findall('"md5sum":"(.*?)"', content)[0]
        pn = re.findall('"totalPageNum":"(.*?)"', content)[0]
        rsign = re.findall('"rsign":"(.*?)"', content)[0]
        # md5 is appended verbatim, as in the original code.
        content_url = ('https://wkretype.bdimg.com/retype/text/' + doc_id
                       + '?rn=' + pn + '&type=txt' + md5 + '&rsign=' + rsign)
        content = json.loads(fetch_url(content_url))
        result = ''
        for item in content:
            for i in item['parags']:
                result += i['c'].replace('\\r', '\r').replace('\\n', '\n')
        return result


    def parse_other(doc_id):
        """Save every page of any other document type as a JPEG image."""
        content_url = "https://wenku.baidu.com/browse/getbcsurl?doc_id=" + doc_id + "&pn=1&rn=99999&type=ppt"
        content = fetch_url(content_url)
        url_list = re.findall('{"zoom":"(.*?)","page"', content)
        url_list = [item.replace("\\", '') for item in url_list]
        if not os.path.exists(doc_id):
            os.mkdir(doc_id)
        for index, url in enumerate(url_list):
            content = session.get(url).content
            path = os.path.join(doc_id, str(index) + '.jpg')
            with open(path, 'wb') as f:
                f.write(content)
        print("Images saved in folder: " + doc_id)


    def save_file(filename, content):
        """Write the extracted text to a UTF-8 file."""
        with open(filename, 'w', encoding='utf8') as f:
            f.write(content)
        print('Saved as: ' + filename)


    # test_txt_url = 'https://wenku.baidu.com/view/cbb4af8b783e0912a3162a89.html?from=search'
    # test_ppt_url = 'https://wenku.baidu.com/view/2b7046e3f78a6529657d5376.html?from=search'
    # test_pdf_url = 'https://wenku.baidu.com/view/dd6e15c1227916888586d795.html?from=search'
    # test_xls_url = 'https://wenku.baidu.com/view/eb4a5bb7312b3169a551a481.html?from=search'


    def main():
        url = input('Enter the Baidu Wenku document URL to download: ')
        content = fetch_url(url)
        doc_id = get_doc_id(url)
        doc_type = parse_type(content)  # renamed from `type` to avoid shadowing the builtin
        title = parse_title(content)
        if doc_type == 'doc':
            result = parse_doc(content)
            save_file(title + '.txt', result)
        elif doc_type == 'txt':
            result = parse_txt(doc_id)
            save_file(title + '.txt', result)
        else:
            parse_other(doc_id)


    if __name__ == "__main__":
        main()
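The line-break logic inside `parse_doc` can be tried offline. The sketch below is a minimal illustration, not the original author's code: `SAMPLE_PAGE` is a hand-written string in roughly the shape the regex expects (real page JSON may differ), and `group_fragments_by_y` is a hypothetical helper name. It applies the same regex and starts a new line whenever the `y` value changes. Unlike `parse_doc`, it does not emit a newline before the first line.

```python
import re

# Hypothetical sample, hand-written for illustration only; the real per-page
# JSON served for a document may have a different layout.
SAMPLE_PAGE = (
    '{"body":['
    '{"c":"Hello ","p":{"h":16,"y":100,"x":10}},'
    '{"c":"world","p":{"h":16,"y":100,"x":60}},'
    '{"c":"Second line","p":{"h":16,"y":130,"x":10}}'
    ']}'
)


def group_fragments_by_y(page_json):
    """Join text fragments that share a y coordinate into one line."""
    lines = []
    last_y = None
    # Same pattern as parse_doc: capture each fragment's text and its y value.
    for text, y in re.findall(r'"c":"(.*?)".*?"y":(.*?),', page_json):
        if y != last_y:
            # A new y coordinate starts a new output line.
            lines.append(text)
            last_y = y
        else:
            # Same y coordinate: the fragment continues the current line.
            lines[-1] += text
    return "\n".join(lines)


if __name__ == "__main__":
    print(group_fragments_by_y(SAMPLE_PAGE))
```

Collecting lines in a list and joining them once avoids the repeated string concatenation used in `parse_doc`, which may matter for long documents.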

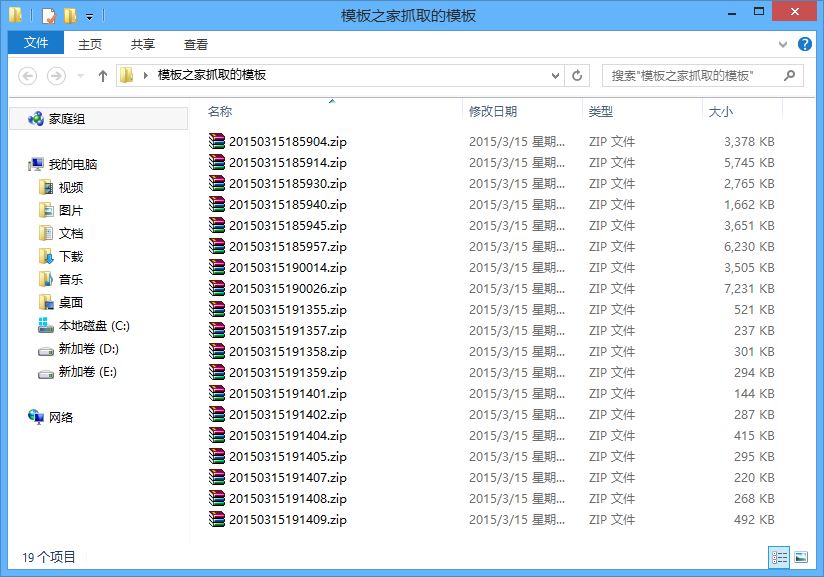

Crawl result


Original source: https://github.com/Jack-Cherish/python-spider